# Image to Text OCR Explained: How It Works

In 1976, Ray Kurzweil demonstrated the first reading machine that could convert printed text into synthesized speech for blind users. The machine cost $50,000 and recognized text at 80 words per minute with occasional errors. Fifty years later, the same task runs on a phone at a thousand words per second with 99 plus percent accuracy on clean print.

The intervening half century of optical character recognition research produced the algorithms, training datasets, and neural architectures that make modern OCR feel magical. This guide explains what is actually happening inside an OCR engine, why accuracy varies so dramatically across inputs, which tools serve which use cases, and how to get the best possible results from your own OCR work.

> "OCR is the oldest problem in computer vision that is still not solved. Every year we get closer to universal accuracy, and every year new document types arrive that break the current models." -- Raymond Smith, Tesseract lead maintainer

## What OCR Actually Does

OCR takes an image containing text and returns character codes. The image might be a scanned document, a photograph of a sign, a screenshot with embedded text, or a PDF rendered to pixels. The output is plain text, sometimes with positional information, sometimes with formatting, depending on the tool.

The problem is hard because the same character can look different in thousands of ways. Font, size, stroke weight, italic versus upright, ink density, paper texture, scan resolution, lighting conditions, and camera angle all affect the pixel pattern that represents a given letter. A good OCR engine tolerates all of this variation while still distinguishing characters that look similar, like lowercase l and uppercase I, or zero and uppercase O.

## The Classical Pipeline

Traditional OCR runs through six stages in order. Understanding the stages helps you diagnose problems when OCR fails.

### Stage 1: Preprocessing

The raw image is cleaned before analysis. Common preprocessing steps include deskew, which rotates the page so lines are horizontal, binarization, which converts grayscale or color to pure black and white, noise removal, which eliminates scattered speckles, and contrast normalization, which handles uneven lighting.

Good preprocessing dramatically improves downstream accuracy. Tesseract and commercial engines include automatic preprocessing by default, but the automatic choices are not always right for difficult scans.
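As a rough illustration of the noise removal step, the sketch below applies a small median filter to a grayscale image held as nested Python lists. The name `median_filter` and the list-of-rows representation are assumptions made for this example; production engines operate on optimized image buffers rather than Python lists.

```python
from statistics import median


def median_filter(image, radius=1):
    """Replace each pixel with the median of its neighbourhood.

    image: list of rows, each a list of grayscale ints (0 = black, 255 = white).
    radius: 1 gives a 3x3 window. Pixels outside the image are ignored
    rather than padded, so border windows are simply smaller.
    """
    height, width = len(image), len(image[0])
    result = []
    for y in range(height):
        row = []
        for x in range(width):
            window = [
                image[ny][nx]
                for ny in range(max(0, y - radius), min(height, y + radius + 1))
                for nx in range(max(0, x - radius), min(width, x + radius + 1))
            ]
            # median() may return a .5 value for even-sized border windows;
            # int() truncates it back to a pixel value.
            row.append(int(median(window)))
        result.append(row)
    return result
```

An isolated dark speckle is outvoted by its white neighbours and disappears, while strokes several pixels wide survive largely intact.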

### Stage 2: Page Segmentation

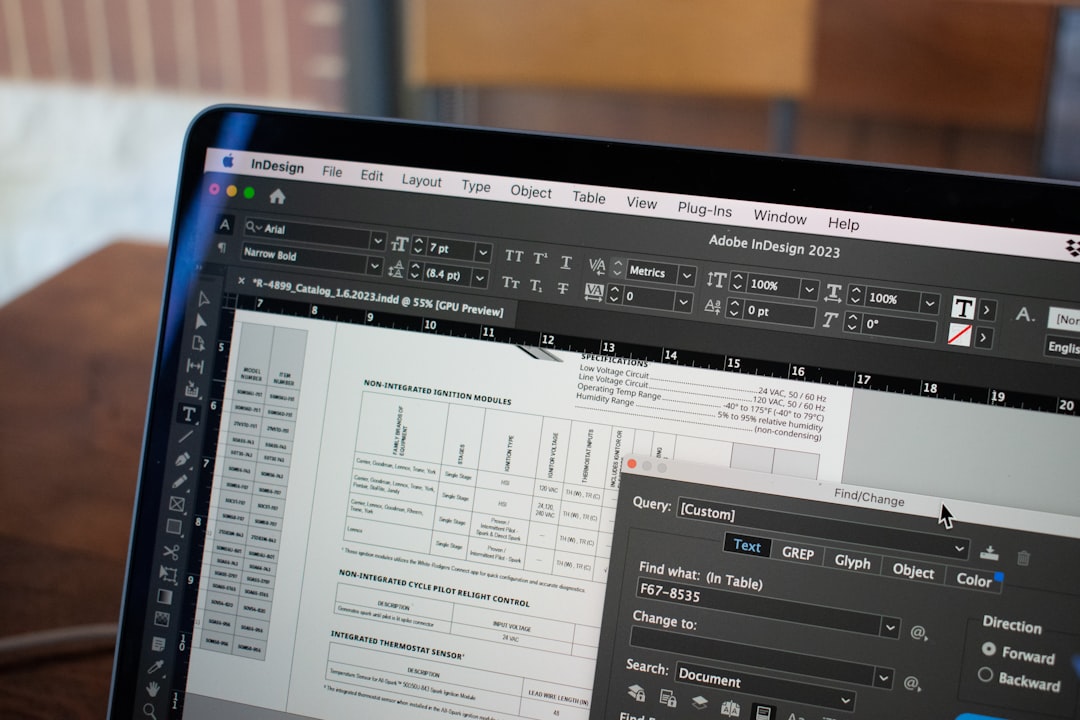

The cleaned image is divided into regions. Text regions are distinguished from images and white space. Text columns are identified. Tables are bounded. Captions are associated with figures. This is the stage where layout understanding happens.

Layout analysis is hard on magazines and newspapers that mix multi column text, floating images, and complex typography. It is easier on plain letters and technical documents with uniform layouts.

### Stage 3: Line Segmentation

Text regions are divided into individual lines. Horizontal projection profiles identify the gaps between lines. Skew correction may need to run again at the line level if the page is unevenly skewed.
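A horizontal projection profile can be sketched in a few lines. The example assumes a binarized region stored as rows of 0 (background) and 1 (ink); the function name `find_text_lines` and the `min_ink` parameter are illustrative, not part of any particular engine.

```python
def find_text_lines(binary, min_ink=1):
    """Locate text lines from the horizontal projection profile.

    binary: list of rows, each a list of 0/1 values (1 = ink).
    min_ink: rows with fewer ink pixels than this count as gaps.
    Returns (top, bottom) row index pairs with bottom exclusive.
    """
    profile = [sum(row) for row in binary]  # ink pixels per row
    lines = []
    start = None
    for y, ink in enumerate(profile):
        if ink >= min_ink and start is None:
            start = y                      # entering a text line
        elif ink < min_ink and start is not None:
            lines.append((start, y))       # leaving a text line
            start = None
    if start is not None:                  # line touches the bottom edge
        lines.append((start, len(profile)))
    return lines
```

This only works when the lines are close to horizontal, which is why skew correction has to come first.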

### Stage 4: Word and Character Segmentation

Lines are divided into words by identifying gaps wider than the average intra word spacing. Words are divided into characters by identifying vertical boundaries. Connected letters in cursive, touching characters in dense print, and broken characters in low quality scans all complicate this step.
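The gap-based word split can be sketched the same way, this time with a vertical profile over one line image. The threshold `word_gap` is passed in as an assumption; in practice it would be estimated from the distribution of gap widths on the line. Both function names are hypothetical.

```python
def column_profile(line):
    """Count ink pixels in every column of a binary line image (1 = ink)."""
    return [sum(column) for column in zip(*line)]


def split_words(profile, word_gap):
    """Group inked columns into words.

    A run of empty columns at least `word_gap` wide ends the current word;
    narrower runs are treated as spacing between letters of the same word.
    Returns (start, end) column pairs with end exclusive.
    """
    words = []
    start = None      # first column of the word being built
    last_ink = None   # most recent column that contained ink
    for x, ink in enumerate(profile):
        if ink == 0:
            continue
        if start is None:
            start = x
        elif x - last_ink - 1 >= word_gap:
            words.append((start, last_ink + 1))
            start = x
        last_ink = x
    if start is not None:
        words.append((start, last_ink + 1))
    return words
```

Touching or broken characters defeat this kind of simple gap analysis, which is one reason neural engines recognize whole lines instead of pre-segmented characters.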

### Stage 5: Feature Extraction or Classification

Each segmented character is classified. The classical approach extracted geometric features and matched them against a reference dictionary. The neural approach processes the pixel grid directly through convolutional or transformer layers and outputs a character probability distribution.

### Stage 6: Post Processing

The classified character stream is corrected using language models. Misrecognized characters often become valid words through spellcheck. Context aware models fix common confusions like rn versus m or cl versus d. Dictionary lookups validate terms.
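A minimal sketch of confusion-aware correction follows. It assumes a plain set of known words as the vocabulary and uses only the two confusions named above; real engines use statistical language models, and the names `CONFUSIONS`, `candidates`, and `correct_word` are invented for this example.

```python
# (misread, intended) pairs taken from the confusions mentioned in the text.
CONFUSIONS = [("rn", "m"), ("cl", "d")]


def candidates(word, confusions=CONFUSIONS):
    """Yield the word itself, then every variant with one substitution applied."""
    yield word
    for wrong, right in confusions:
        start = word.find(wrong)
        while start != -1:
            yield word[:start] + right + word[start + len(wrong):]
            start = word.find(wrong, start + 1)


def correct_word(word, vocabulary, confusions=CONFUSIONS):
    """Return the first candidate found in the vocabulary, else the word unchanged."""
    for candidate in candidates(word, confusions):
        if candidate in vocabulary:
            return candidate
    return word
```

Because the original word is tried first, valid words such as "corn" or "clear" are left alone even though they contain a confusable pair.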

## The Neural Revolution

OCR accuracy plateaued in the 1990s at around 95 percent on clean print using classical techniques. The jump to 99 plus percent came from neural networks, specifically convolutional neural networks for feature extraction and recurrent or transformer networks for sequence modeling.

Modern engines like Tesseract, which added LSTM based recognition with version 4 in 2018, and commercial offerings from Google, Microsoft, and ABBYY use end to end neural architectures. The input is a line image and the output is a character sequence, with no explicit feature engineering in between.
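To make the last step of a line recognizer concrete, the sketch below turns per-frame probabilities into a character sequence. The text does not name the decoding scheme; connectionist temporal classification (CTC) with greedy decoding is assumed here, as used in the Graves et al. and Shi et al. papers listed in the references. The function name and the convention that class 0 is the blank are assumptions.

```python
BLANK = 0  # assumed index of the CTC "no character" class


def ctc_greedy_decode(frame_probs, alphabet):
    """Decode a line from per-frame class probabilities.

    frame_probs: one list per image column slice, holding probabilities for
                 [blank] followed by each character in `alphabet`.
    Picks the most likely class per frame, merges consecutive repeats,
    and drops blanks.
    """
    decoded = []
    previous = BLANK
    for probs in frame_probs:
        best = max(range(len(probs)), key=probs.__getitem__)
        if best != BLANK and best != previous:
            decoded.append(alphabet[best - 1])
        previous = best
    return "".join(decoded)
```

The blank class is what lets the network emit a doubled letter: two runs of the same character separated by a blank decode as two characters rather than one.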

The table below shows benchmark accuracy on the ICDAR 2019 dataset, which contains a mix of print quality conditions.

| Engine | Accuracy on Clean Print | Accuracy on Degraded |
|--------|-------------------------|----------------------|
| Tesseract 4 (LSTM) | 98.1 percent | 82.3 percent |
| Tesseract 5 | 98.8 percent | 86.7 percent |
| ABBYY FineReader 15 | 99.4 percent | 90.2 percent |
| Google Cloud Vision | 99.6 percent | 92.1 percent |
| Microsoft Azure OCR | 99.3 percent | 89.8 percent |
| Amazon Textract | 99.1 percent | 87.4 percent |

The gap between free and commercial tools has narrowed to under one percentage point on clean print. Commercial tools still lead substantially on degraded inputs and unusual layouts.

## Why Accuracy Varies

OCR accuracy depends on factors that often matter more than engine choice. The variables below account for most observed differences.

Resolution matters first. Below 200 dpi, character details disappear and accuracy collapses. 300 dpi is the minimum for reliable OCR. 600 dpi helps on small text or degraded sources.

Contrast matters second. Faded ink on white paper, dark ink on colored paper, and photocopies of photocopies all reduce contrast and confuse the binarization step. A histogram equalization preprocessing pass helps.

Skew matters third. Pages rotated by more than a few degrees need deskewing before OCR. Modern engines do this automatically, but severe skew above 10 degrees can defeat the automatic correction.

Font matters fourth. Standard serif and sans serif fonts recognize well because training data contains millions of examples. Decorative fonts, historical typefaces, and unusual scripts have thinner training coverage.

Language matters fifth. English and other Latin script languages recognize best because of training data volume. Chinese, Japanese, and Korean need dedicated models with thousands of character classes rather than the hundred or so needed for Latin scripts.
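The resolution thresholds above are easy to check before running OCR. The sketch below assumes you know the physical page width; the function names and the wording of the returned labels are illustrative only.

```python
def effective_dpi(width_px, page_width_in):
    """Pixels per inch implied by the image width and the physical page width."""
    return width_px / page_width_in


def resolution_advice(dpi):
    """Map a dpi value onto the thresholds given in the text."""
    if dpi < 200:
        return "too low"      # character details disappear
    if dpi < 300:
        return "marginal"     # below the reliable minimum
    return "ok"


def upscale_factor(dpi, target_dpi=300):
    """Scale factor needed to reach the target resolution (never below 1.0)."""
    return max(1.0, target_dpi / dpi)
```

For example, a US Letter page (8.5 inches wide) photographed at 1275 pixels across works out to 150 dpi, so it would need a 2x upscale or a rescan.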

## When to Use OCR

OCR is the right tool for specific situations. Using it indiscriminately wastes time.

Use OCR when converting scanned documents to editable text. The whole point of the operation is to unlock content trapped in images.

Use OCR when searching inside image PDFs. OCR software can add an invisible text layer over the image pages, which makes the content searchable while preserving visual appearance.

Use OCR when extracting specific data from forms, invoices, or receipts. Modern engines combined with layout analysis can populate spreadsheets from photographed documents.

Do not use OCR on content that was born digital and is available as text. Converting a born digital PDF to image and OCRing back to text loses fidelity for no reason. Use direct text extraction instead.

Writers who archive research sources through [When Notes Fly](https://whennotesfly.com) often face a mix of born digital PDFs and scanned library materials. The workflow branches based on whether text extraction works. If it does, skip OCR. If it does not, run OCR.
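The branch can be expressed as a small check on whatever direct text extraction returns. This is a sketch under assumptions: the extraction library is not specified, `min_chars` is an arbitrary threshold, and both function names are hypothetical.

```python
def needs_ocr(extracted_text, min_chars=25):
    """True when direct extraction returned too little real text to trust.

    Counts only letters and digits so that stray whitespace or form feeds
    from an image-only page do not look like content.
    """
    meaningful = sum(1 for ch in extracted_text if ch.isalnum())
    return meaningful < min_chars


def pages_needing_ocr(page_texts, min_chars=25):
    """Return the indexes of pages whose extracted text is effectively empty."""
    return [i for i, text in enumerate(page_texts) if needs_ocr(text, min_chars)]
```

Checking page by page matters for mixed documents, where a born digital report may have a few scanned appendix pages.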

> "OCR is the answer when no better answer exists. When better answers exist, use them." -- Jakob Nielsen, usability researcher

## Practical Tool Selection

The right OCR tool depends on volume, sensitivity, and budget.

### Free Online Tools

For occasional single document OCR, free web tools handle the job without installation. Upload an image or PDF, get text back. [File Converter Free OCR](https://file-converter-free.com/image-to-text) accepts images up to 100 MB and PDFs up to 20 pages, supports 60 plus languages, and deletes uploaded content within one hour.

Competing free tools include OnlineOCR.net, i2OCR.com, and NewOCR.com. Each has different file size limits, language support, and layout handling. For a quick job, try two or three and pick whichever produces the cleanest output for your specific document.

### Free Desktop Tools

Tesseract is the standard free desktop OCR engine. It runs on Windows, Mac, and Linux. Installation on macOS through Homebrew is a one line command. On Windows, the official installer handles setup. On Linux, the package manager provides binaries.

Tesseract runs from the command line. Running `tesseract input.png output txt` produces an output.txt file with the recognized text. Additional flags control language, page segmentation mode, and output format. For casual use, GUI wrappers like gImageReader, NAPS2, and FreeOCR put a graphical interface on Tesseract.
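When calling Tesseract from a script, it helps to build the argument list in one place. The sketch below uses the `-l` and `--psm` flags and the `txt`, `pdf`, and `hocr` output configs from the Tesseract command line; the wrapper functions themselves are hypothetical, and `run_tesseract` assumes the `tesseract` binary is on the PATH.

```python
import subprocess


def tesseract_command(image_path, output_base, languages=("eng",),
                      psm=None, output_format="txt"):
    """Build the argument list for one Tesseract run.

    languages: language codes, joined with "+" for the -l flag.
    psm: optional page segmentation mode number.
    output_format: trailing config name such as "txt", "pdf", or "hocr".
    """
    command = ["tesseract", str(image_path), str(output_base),
               "-l", "+".join(languages)]
    if psm is not None:
        command += ["--psm", str(psm)]
    command.append(output_format)
    return command


def run_tesseract(image_path, output_base, **options):
    """Run Tesseract and raise CalledProcessError if it exits with an error."""
    subprocess.run(tesseract_command(image_path, output_base, **options),
                   check=True)
```

Passing a list rather than a shell string avoids quoting problems with file names that contain spaces.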

### Commercial Desktop Tools

ABBYY FineReader remains the benchmark for demanding OCR work. Accuracy exceeds Tesseract on almost every document type, layout analysis is substantially better, and format preservation during conversion to Word or Excel is more reliable. The cost is $200 for the home edition.

Readiris and OmniPage offer competitive commercial alternatives in a similar price range. For specialized needs like mathematical equations or chemistry notation, Mathpix and similar domain specific tools outperform general OCR.

### Cloud OCR APIs

Google Cloud Vision, Microsoft Azure AI Vision, and Amazon Textract offer OCR as a cloud service. Call an HTTP endpoint with an image, get structured results back. Pricing is typically $1 to $3 per thousand pages.

Cloud APIs excel on large batch jobs where self hosted accuracy is insufficient. They also handle complex documents like invoices and receipts that require layout understanding beyond basic OCR.

For regulated content with privacy constraints, cloud APIs may not be appropriate. Teams managing study materials through [Pass4Sure](https://pass4-sure.us) that contain licensed content run Tesseract locally rather than sending content to cloud providers that might retain copies.

## Preprocessing That Improves Accuracy

A few preprocessing steps reliably improve OCR accuracy across most engines. The list below covers the highest impact interventions.

First, deskew. Even 2 degrees of rotation hurts recognition. Tools like ImageMagick with the deskew option or dedicated scanner software usually handle this.

Second, denoise. Scattered black speckles on white backgrounds confuse classifiers. A small median filter or ImageMagick's noise reduction removes most of them.

Third, adjust contrast. Low contrast inputs benefit from CLAHE or simple histogram stretching. The goal is clear black text on a clean background.

Fourth, upscale if resolution is below 300 dpi. Neural upscaling with tools like waifu2x or Real-ESRGAN often produces better results than naive bilinear scaling.

Fifth, binarize carefully. A single global threshold, whether fixed or chosen automatically by Otsu's method, works for clean scans. Locally adaptive thresholds like Sauvola handle uneven lighting better.
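For the binarization step, the sketch below implements Otsu's method, which picks one global threshold from the grayscale histogram by maximizing the variance between the dark and light classes. It is written in plain Python for readability; the function names and the 1 = ink output convention are assumptions, and a locally adaptive method such as Sauvola would need a different, window-based calculation.

```python
def otsu_threshold(pixels):
    """Return the gray level t that best separates dark from light pixels.

    pixels: iterable of ints in 0..255. Pixels <= t form the dark class.
    """
    histogram = [0] * 256
    for value in pixels:
        histogram[value] += 1
    total = sum(histogram)
    total_sum = sum(level * count for level, count in enumerate(histogram))

    best_threshold, best_variance = 0, -1.0
    weight_dark = 0   # number of pixels at or below the candidate level
    sum_dark = 0      # sum of their gray values
    for level in range(256):
        weight_dark += histogram[level]
        sum_dark += level * histogram[level]
        if weight_dark == 0:
            continue
        weight_light = total - weight_dark
        if weight_light == 0:
            break
        mean_dark = sum_dark / weight_dark
        mean_light = (total_sum - sum_dark) / weight_light
        # Between-class variance, up to a constant factor of 1 / total**2.
        variance = weight_dark * weight_light * (mean_dark - mean_light) ** 2
        if variance > best_variance:
            best_threshold, best_variance = level, variance
    return best_threshold


def binarize(image, threshold):
    """Map a grayscale image (rows of ints) to 1 = ink, 0 = background."""
    return [[1 if value <= threshold else 0 for value in row] for row in image]
```

Because the threshold is global, a shadow across half the page can push text on the dark side into the background class, which is where adaptive methods earn their keep.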

The [File Converter Free image enhancer](https://file-converter-free.com/image-enhance) applies several of these preprocessing steps automatically before OCR, which often produces better results than running the raw image through OCR directly.

## Handling Multi Language Documents

Documents that mix languages need engines configured for all of them. Tesseract accepts multiple language codes in one run. For example, `tesseract input.png output -l eng+fra+deu` runs recognition with the English, French, and German models together.

The accuracy cost is modest for related languages but can be significant when mixing Latin with non Latin scripts. For documents with discrete language sections, better results come from identifying the language of each section first and running OCR with the correct model for each.

Multilingual writers who work through [Evolang](https://evolang.info) on communication across languages routinely encounter documents with English, Spanish, and Portuguese mixed on the same page. Pre segmenting by language before OCR improves accuracy substantially.

## Tables and Structured Data

General OCR produces flowing text. Tables need specialized handling because cell boundaries, column alignment, and row associations all matter.

Commercial engines like ABBYY include table detection. Open source tools like Tesseract with layout analysis handle some tables correctly. Cloud APIs like Amazon Textract and Google Document AI offer dedicated table extraction endpoints.

For forms with structured fields, templating produces better results than generic OCR. A template defines where each field lives on the page. The engine extracts the value from each defined region. This approach is reliable for invoices, receipts, and regulated forms.
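A minimal sketch of templated extraction, assuming the OCR engine has already returned words with pixel bounding boxes. The `Box`, `Word`, and `extract_fields` names, the field names, and the coordinates are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    left: int
    top: int
    right: int
    bottom: int

    def contains(self, x, y):
        """True if the point lies inside the box (right and bottom exclusive)."""
        return self.left <= x < self.right and self.top <= y < self.bottom

    def center(self):
        return ((self.left + self.right) / 2, (self.top + self.bottom) / 2)


@dataclass(frozen=True)
class Word:
    text: str
    box: Box


def extract_fields(words, template):
    """Collect the words whose centers fall inside each template region.

    template: dict mapping field name to the Box where that field lives.
    Words are joined in reading order, approximated here by (top, left).
    """
    fields = {}
    for name, region in template.items():
        inside = [w for w in words if region.contains(*w.box.center())]
        inside.sort(key=lambda w: (w.box.top, w.box.left))
        fields[name] = " ".join(w.text for w in inside)
    return fields
```

The template only stays valid while the form layout is stable and the scan is aligned, so deskewing and consistent scaling come before this step.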

Business document workflows at [Corpy](https://corpy.xyz) that process registration forms from dozens of jurisdictions use templated extraction rather than generic OCR, because the forms have stable layouts that templating can exploit.

## Handwriting Recognition

Handwriting is substantially harder than print. Character shapes vary from person to person, writing angle differs, letter connections in cursive fuse characters, and spacing is inconsistent.

Specialized handwriting recognition models trained on handwritten data achieve 85 to 95 percent accuracy on clear writing. Google Cloud Vision, Microsoft Azure, and Amazon Textract all offer handwriting support. Tesseract does not handle handwriting well without custom training.

For personal notes, Apple Live Text and Google Lens both read clear printed handwriting competently. For historical archives with cursive manuscripts, transcription tools like Transkribus combine OCR with crowd sourced correction.

## Accuracy Measurement

Measuring OCR accuracy requires a ground truth. Without knowing the correct text, you cannot know whether the recognized text is right.

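The standard metrics are character error rate (CER) and word error rate (WER). CER is the edit distance between the recognized text and the ground truth, divided by the length of the ground truth. WER is the same calculation at the word level: the number of word substitutions, insertions, and deletions divided by the number of words in the ground truth.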
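Both metrics reduce to a Levenshtein edit distance, computed over characters for CER and over word lists for WER. The sketch below is a plain dynamic programming version; the function names are chosen for this example, and it assumes a non-empty ground truth.

```python
def edit_distance(reference, hypothesis):
    """Levenshtein distance between two sequences (strings or lists)."""
    previous = list(range(len(hypothesis) + 1))
    for i, ref_item in enumerate(reference, start=1):
        current = [i]
        for j, hyp_item in enumerate(hypothesis, start=1):
            substitution = previous[j - 1] + (0 if ref_item == hyp_item else 1)
            current.append(min(previous[j] + 1,      # deletion
                               current[j - 1] + 1,   # insertion
                               substitution))
        previous = current
    return previous[-1]


def cer(ground_truth, recognized):
    """Character error rate: character edits per ground truth character."""
    return edit_distance(ground_truth, recognized) / len(ground_truth)


def wer(ground_truth, recognized):
    """Word error rate: word edits per ground truth word."""
    truth_words = ground_truth.split()
    return edit_distance(truth_words, recognized.split()) / len(truth_words)
```

Note that both rates can exceed 1.0 when the recognized text contains many spurious insertions.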

For production deployments, maintaining a test set of representative documents with hand corrected ground truth lets you track accuracy over time. When adopting a new engine or preprocessing step, regression testing against the test set reveals whether accuracy improved or degraded.

## OCR in Research and Archives

Libraries, museums, and research archives use OCR extensively for digitizing historical collections. The challenges include historical typefaces, deteriorated paper, unusual layouts, and non standard orthography.

Large scale projects like the Europeana Newspapers project OCRed millions of historical newspapers with accuracy around 85 percent on average. That accuracy is unacceptable for precise text editing but fully adequate for full text search across historical collections.

Researchers cataloging historical field journals for archives like [Strange Animals](https://strangeanimals.info) that contain wildlife observations from the 19th and early 20th centuries typically combine automated OCR with manual correction of key passages, accepting that fully automated conversion will not reach publication quality.

## Privacy and Compliance

OCR processing on sensitive documents raises privacy questions. Cloud APIs transmit content to third parties. Even local processing may interact with operating system features like cloud sync that inadvertently upload documents.

For HIPAA regulated medical documents, GDPR covered personal data, and similar regulated content, self hosted Tesseract or certified commercial software running offline are the defensible options. Read the privacy policy and data handling commitments of any cloud OCR service before sending sensitive content.

> "The privacy policy of your OCR tool is part of your data protection plan, not a legal afterthought." -- Bruce Schneier, security researcher

## Integration Patterns

OCR fits into larger workflows as a preprocessing step. Common integration patterns include the following.

Searchable PDF. Run OCR on scanned pages and embed the recognized text as an invisible layer over the original image. Users see the familiar scan while full text search works normally. Tools like OCRmyPDF automate this operation.

Text extraction pipeline. Ingest scanned documents, run OCR, extract structured data, and feed the output to downstream systems. Invoice automation, contract analysis, and research indexing all use this pattern.

Accessibility remediation. Convert image based PDFs to text based PDFs so screen readers can navigate the content. Essential for compliance with accessibility regulations.

Mobile note capture. Photograph a whiteboard or a printed page and immediately extract text for editing. Apple Notes, Google Keep, and OneNote all integrate OCR for this use case.

## Scripting Bulk Workflows

Bulk OCR benefits from scripting. A folder of 500 scanned documents is not a job for manual clicking.

OCRmyPDF is the standard tool for bulk PDF OCR. It adds a text layer to each scanned PDF in a folder, preserving visual appearance while making the content searchable. The command is ocrmypdf input.pdf output.pdf, with flags for language, optimization, and deskew.

For images rather than PDFs, a simple shell loop over Tesseract works. Python scripts using pytesseract offer more control, including preprocessing, error handling, and output parsing.
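As a sketch of the scripted approach, the example below builds one `ocrmypdf` command per PDF and collects failures instead of stopping at the first one. The `-l`, `--deskew`, and `--skip-text` options are OCRmyPDF flags; the helper names and the folder names in the usage note are assumptions, and running the commands requires OCRmyPDF to be installed.

```python
import subprocess
from pathlib import Path


def build_ocrmypdf_commands(pdf_paths, output_dir, language="eng"):
    """Return one ocrmypdf argument list per input PDF, in sorted order.

    --deskew straightens pages before recognition; --skip-text leaves pages
    that already contain a text layer untouched.
    """
    output_dir = Path(output_dir)
    commands = []
    for pdf in sorted(Path(p) for p in pdf_paths):
        target = output_dir / pdf.name
        commands.append(["ocrmypdf", "-l", language, "--deskew", "--skip-text",
                         str(pdf), str(target)])
    return commands


def run_commands(commands):
    """Run each command and return (input file, error text) for any failure."""
    failures = []
    for command in commands:
        result = subprocess.run(command, capture_output=True, text=True)
        if result.returncode != 0:
            failures.append((command[-2], result.stderr.strip()))
    return failures
```

A typical call would be `run_commands(build_ocrmypdf_commands(Path("scans").glob("*.pdf"), "searchable"))`, with the output folder created beforehand.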

For cloud based bulk processing, tools like Google Document AI, Azure Document Intelligence, and Amazon Textract accept folder level inputs and return structured outputs at scale. Pricing models favor bulk processing with per thousand page rates.

## QR Codes and Barcodes Distinct from OCR

Machine readable codes like QR codes and linear barcodes are not OCR. They use dedicated decoders that exploit the structured format of the code. Tools like zbar and libdmtx handle barcodes, while QR specific libraries handle QR codes. The free [qr-bar-code.com](https://qr-bar-code.com) site generates and decodes these codes without any OCR step.

OCR would handle QR codes poorly because the visual pattern is not text. Match the tool to the problem.

## Checking Your OCR Output

Every automated OCR output needs review. Five checks catch most problems.

First, look at the first and last pages. OCR often degrades at page boundaries where layout analysis struggles.

Second, search for obvious artifacts like rn that should be m or cl that should be d.

Third, verify numbers. Single digit errors in financial documents are catastrophic and often come from OCR misreading.

Fourth, verify proper nouns. Names of people, places, and organizations often misrecognize because they are outside the language model vocabulary.

Fifth, spot check random pages to catch systematic errors that affect the whole document.
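Part of the number check can be automated. The sketch below flags tokens that look numeric but contain a letter commonly confused with a digit, such as O for 0 or l for 1. The pattern and the function name are a hypothetical heuristic, not an exhaustive test, and flagged tokens still need a human look.

```python
import re

# A token is suspicious when it contains at least one digit, at least one
# look-alike letter (O, o, I, l), and nothing besides digits, look-alike
# letters, and common number punctuation.
LOOKALIKE_NUMBER = re.compile(r"(?=.*\d)(?=.*[OoIl])[\dOoIl.,$]+")


def suspicious_numbers(text):
    """Return whitespace-separated tokens that mix digits with look-alike letters."""
    return [token for token in text.split() if LOOKALIKE_NUMBER.fullmatch(token)]
```

Clean numbers and ordinary words pass through untouched, so the output is a short list of places to compare against the original image.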

For legally significant documents, a second human reviewer should repeat the checks. For archival projects, accept that 100 percent accuracy is impossible and plan for ongoing correction as users surface errors.

## Cognitive Implications

OCR has broader cognitive implications beyond converting files. Making text searchable transforms how people interact with document archives. Content that was previously locked in image form becomes discoverable through keyword search.

Researchers at [What's Your IQ](https://whats-your-iq.com) who study information retrieval note that searchable OCR converted archives shift user behavior toward keyword browsing rather than linear reading. This is a mixed blessing. Discoverability improves dramatically, but depth of engagement with specific documents decreases.

The broader trend is toward full text searchability of every document humans have ever written. OCR is the bridge between the image based record of the past and the text based searchability of the future.

## References

1. Smith, R. (2007). An overview of the Tesseract OCR engine. Ninth International Conference on Document Analysis and Recognition. DOI: 10.1109/ICDAR.2007.4376991

2. Graves, A., Liwicki, M., Fernandez, S., Bertolami, R., Bunke, H., Schmidhuber, J. (2009). A novel connectionist system for unconstrained handwriting recognition. IEEE Transactions on Pattern Analysis and Machine Intelligence, 31(5). DOI: 10.1109/TPAMI.2008.137

3. Breuel, T. M. (2017). High performance text recognition using a hybrid convolutional LSTM implementation. ICDAR. DOI: 10.1109/ICDAR.2017.12

4. Kurzweil, R. (1990). The Age of Intelligent Machines. MIT Press.

5. ICDAR (2019). International Conference on Document Analysis and Recognition proceedings. IEEE.

6. Shi, B., Bai, X., Yao, C. (2017). An end to end trainable neural network for image based sequence recognition. IEEE Transactions on Pattern Analysis and Machine Intelligence, 39(11). DOI: 10.1109/TPAMI.2016.2646371

7. Baek, Y., Lee, B., Han, D., Yun, S., Lee, H. (2019). Character region awareness for text detection. IEEE CVPR. DOI: 10.1109/CVPR.2019.00959

## Frequently Asked Questions

### What accuracy can modern OCR achieve?

Clean 300 dpi printed text in common fonts reaches 99 plus percent accuracy. Handwriting, poor scans, and unusual fonts are substantially lower.

### Does OCR work offline?

Yes. Tesseract and several commercial engines run entirely on device without sending data to any server, which matters for sensitive documents.

### Can OCR read handwriting?

Yes, but only with models trained on handwriting. General OCR engines trained on print produce poor results on cursive text.
